CG Card

The CG card defines the method to solve the matrix equation.

On the Solve/Run tab, in the Solution settings group, click the Preconditioner icon.

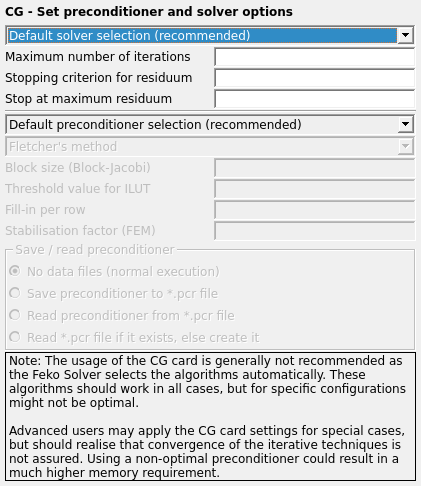

Figure 1. The CG - Set preconditioner and solver options dialog.

Normally the CG card should not be used. Feko automatically selects suitable solution techniques, preconditioners and other options depending on the problem type. These automatic choices should be sufficient in all cases, but they might not be optimal for specific MLFMM and FEM configurations that use iterative solvers.

Parameters:

- Default solver selection (recommended). When this option is selected, Feko automatically selects a suitable solver along with all its required parameters. The choice depends on whether Feko is executed sequentially or in parallel, and on which solution method is employed (for example, a direct LU decomposition solver for the MoM, and an iterative solver for the MLFMM or FEM). Regarding the solver type, this option has the same effect as not using a CG card, but it still allows the user to change the default termination criteria for the iterative solver types, or to change the preconditioner.

- Gauss elimination (LINPACK routines). Use Gauss elimination from the LINPACK routines.

- Conjugate Gradient Method (CGM)

- Biconjugate gradient method (BCG)

- Iterative solution with band matrix decomposition

- Gauss elimination (LAPACK routines). Use Gauss elimination from the LAPACK routines.

- Block Gauss algorithm (matrix saved to disk). The block Gauss algorithm is used when the matrix has to be saved to the hard disk, for example when a sequential out-of-core solution is performed.

- CGM (Parallel Iterative Method)

- BCG (Parallel Iterative Method)

- CGS (Parallel Iterative Method)

- Bi-CGSTAB (Parallel Iterative Method)

- RBi-CGSTAB (Parallel Iterative Method)

- RGMRES (Parallel Iterative Method)

- RGMRESEV (Parallel Iterative Method)

- RCGR (Parallel Iterative Method)

- CGNR (Parallel Iterative Method)

- CGNE (Parallel Iterative Method)

- QMR (Parallel Iterative Method)

- TFQMR (Parallel Iterative Method)

- Parallel LU-decomposition (with ScaLAPACK routines). Use the parallel LU decomposition with ScaLAPACK (solution in main memory) or with out-of-core ScaLAPACK (solution with the matrix stored on the hard disk). This is the default option for parallel solutions and normally does not need to be changed.

- QMR (QMRPACK routines)

- Direct sparse solver. Direct solution method for the ACA or FEM (no preconditioning). When using the parallel Solver, the factorisation type can be specified.

- Maximum number of iterations

- The maximum number of iterations for the iterative techniques.

- Stopping criterion for residuum

- Termination criterion for the normalised residue when using iterative methods. The iterative solver will stop when the normalised residue is smaller than this value.

- Stop at maximum residuum

- For the parallel iterative methods, the solution is terminated when the residuum becomes larger than this value. The iterative solution will stop with an error message indicating that the solution has diverged.
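The three termination settings above can be illustrated with a minimal sketch. It is not Feko's implementation: a plain Jacobi iteration stands in for the iterative solver, the normalised residue is assumed to be ‖b − Ax‖ / ‖b‖, and the names `max_iterations`, `stop_residuum` and `max_residuum` are hypothetical labels for the dialog fields.

```python
import math


def norm(v):
    """Euclidean norm of a real or complex vector."""
    return math.sqrt(sum(abs(x) ** 2 for x in v))


def matvec(a, x):
    """Dense matrix-vector product."""
    return [sum(aij * xj for aij, xj in zip(row, x)) for row in a]


def jacobi_solve(a, b, max_iterations=100, stop_residuum=1e-6, max_residuum=1e6):
    """Jacobi iteration, used only as a stand-in for an iterative solver.

    Returns (x, status, iterations); status is 'converged', 'diverged'
    or 'max_iterations'. Assumes b is non-zero and a has a non-zero diagonal.
    """
    n = len(b)
    x = [0.0] * n
    b_norm = norm(b)
    for k in range(1, max_iterations + 1):
        ax = matvec(a, x)
        # Jacobi update: x_i <- x_i + r_i / a_ii
        x = [x[i] + (b[i] - ax[i]) / a[i][i] for i in range(n)]
        r = [bi - axi for bi, axi in zip(b, matvec(a, x))]
        residue = norm(r) / b_norm  # assumed normalisation
        if residue < stop_residuum:
            return x, "converged", k  # stopping criterion reached
        if residue > max_residuum:
            return x, "diverged", k  # residuum exceeded the upper limit
    return x, "max_iterations", max_iterations
```

A diagonally dominant system such as `[[4, 1], [1, 3]]` ends with `'converged'`, whereas `[[1, 3], [3, 1]]` makes the residue grow at every step until the upper limit is exceeded and the status is `'diverged'`.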

- Preconditioners

- Default preconditioner

- Feko will automatically select a suitable preconditioner and its required parameters. The choice depends on whether a parallel solution is performed and the solver method. This option has the same effect as not using a CG card, but still allows selecting other options, for example the residuum settings.

- No preconditioning

- No preconditioning is used. This option is not recommended with methods that use iterative solvers.

- Scaling the matrix A

- Scaling the matrix [A], so that the elements on the main diagonal are all normalised to one.

- Scaling the matrix [A]^H[A]

- Scaling the matrix [A]^H[A], so that the elements on the main diagonal are all normalised to one.
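As a rough illustration of the two scaling options, the sketch below normalises the main diagonal of a small complex matrix to one. It assumes simple row scaling by 1/a_ii, which is only one of several ways to reach a unit diagonal; the scheme Feko actually applies is not stated here. The function names are hypothetical. For the second option, the diagonal of A^H A is the squared 2-norm of each column of A, so those values are what the scaling would normalise.

```python
def scale_to_unit_diagonal(a, b):
    """Row-scale A x = b so that every diagonal entry of A becomes 1.

    Assumes all diagonal entries are non-zero.
    """
    scaled_a = [[aij / row[i] for aij in row] for i, row in enumerate(a)]
    scaled_b = [bi / a[i][i] for i, bi in enumerate(b)]
    return scaled_a, scaled_b


def normal_matrix_diagonal(a):
    """Diagonal of A^H A: the squared 2-norm of each column of A."""
    n_cols = len(a[0])
    return [sum(abs(row[j]) ** 2 for row in a) for j in range(n_cols)]
```

Because every row of the system is divided by its own diagonal entry, the solution vector is unchanged; only the conditioning seen by the iterative solver differs.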

- Block-Jacobi preconditioning using inverses

- The inverses of the preconditioner are calculated and applied during every iteration step. For performance reasons Block-Jacobi preconditioning using LU-decomposition is recommended.

- Neumann polynomial preconditioning

- Self explanatory.

- Block-Jacobi preconditioning using LU-decomposition

- Block-Jacobi preconditioning where for each block an LU-decomposition is computed in advance, and during the iterations a fast backward substitution is applied.
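The idea of factorising each diagonal block once and then applying only substitutions in every iteration can be sketched as follows. This is an illustrative sketch under stated assumptions, not Feko's code: the LU factorisation omits pivoting (adequate only for small, well-behaved blocks such as the ones in the test system), the matrix is stored densely, and all function names are hypothetical. The `block_size` argument plays the role of the Block size (Block-Jacobi) setting.

```python
def lu_factor(block):
    """Doolittle LU without pivoting; L (unit diagonal) and U share one array."""
    n = len(block)
    lu = [list(row) for row in block]
    for k in range(n):
        for i in range(k + 1, n):
            lu[i][k] /= lu[k][k]
            for j in range(k + 1, n):
                lu[i][j] -= lu[i][k] * lu[k][j]
    return lu


def lu_solve(lu, rhs):
    """Solve L U y = rhs by forward and backward substitution."""
    n = len(rhs)
    y = list(rhs)
    for i in range(n):  # forward substitution (L has a unit diagonal)
        for j in range(i):
            y[i] -= lu[i][j] * y[j]
    for i in reversed(range(n)):  # backward substitution with U
        for j in range(i + 1, n):
            y[i] -= lu[i][j] * y[j]
        y[i] /= lu[i][i]
    return y


def build_block_jacobi(a, block_size):
    """Factorise every diagonal block once, before the iterations start."""
    n = len(a)
    factors = []
    for start in range(0, n, block_size):
        stop = min(start + block_size, n)
        block = [row[start:stop] for row in a[start:stop]]
        factors.append((start, stop, lu_factor(block)))
    return factors


def apply_block_jacobi(factors, r):
    """Apply the preconditioner to a residual vector r (one call per iteration)."""
    z = [0.0] * len(r)
    for start, stop, lu in factors:
        z[start:stop] = lu_solve(lu, r[start:stop])
    return z
```

`build_block_jacobi` is called once, and `apply_block_jacobi` is then called in every iteration step, which is where the saving over recomputing or applying explicit inverses comes from.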

- Incomplete LU-decomposition

- Use an incomplete LU-decomposition of the matrix as a preconditioner.

- Block-Jacobi preconditioning of MLFMM one-level-up

- Special preconditioner for the MLFMM, where additional information is included in the preconditioner.

- LU decomposition of FEM matrix

- An LU decomposition of the FEM matrix is used as preconditioner. This option will require more memory than the default iterative solution. When using the parallel Solver, the factorisation type can be specified.

- ILUT decomposition of FEM matrix

- An incomplete LU decomposition with thresholding of the FEM matrix is used as preconditioner.

- Multilevel ILUT/Diagonal decomposition of the FEM matrix

- Preconditioner for a hybrid FEM/MoM solution. A multilevel sparse incomplete LU-decomposition with thresholding and controlled fill-in is applied as preconditioner.

- Multilevel ILUT/ILUT decomposition of the FEM matrix

- Self explanatory

- Multilevel LU/Diagonal decomposition of the FEM matrix

- Preconditioner for a hybrid FEM/MoM solution. A multilevel sparse LU decomposition of the partitioned system is applied as preconditioner. When using the parallel Solver, the factorisation type can be specified.

- Multilevel FEM-MLFMM LU/diagonal decomposition

- Preconditioner for a hybrid FEM/MLFMM solution. A multilevel sparse LU decomposition of the combined, partitioned FEM/MLFMM system is applied as preconditioner. When using the parallel Solver, the factorisation type can be specified.

- Multilevel FEM-MLFMM ILUT/diagonal decomposition

- Preconditioner for a hybrid FEM/MLFMM solution. A multilevel sparse incomplete LU decomposition with thresholding of the combined FEM/MLFMM system is applied as preconditioner. It should require less memory than the Multilevel FEM-MLFMM LU/diagonal decomposition, but at the risk of slower or no convergence.

- Multilevel FEM-MLFMM ILU(k)/diagonal decomposition

- Preconditioner for a hybrid FEM/MLFMM solution. A multilevel sparse incomplete LU decomposition, with controlled level of fill, of the combined FEM/MoM system is applied as preconditioner. It should require less memory than the Multilevel FEM-MLFMM LU/diagonal decomposition, but at the risk of slower or no convergence.

- Multilevel FEM-MLFMM diagonal domain LU decomposition

- Preconditioner for a hybrid FEM/MLFMM solution. A block-diagonal sparse LU decomposition of the combined FEM-MLFMM system is applied as preconditioner. It will require less memory than the Multilevel FEM-MLFMM LU/diagonal decomposition, but at a high risk of slower or no convergence. When using the parallel Solver, the factorisation type can be specified.

- Sparse Approximate Inverse (SPAI) preconditioner

- Preconditioner which can be used in connection with the MLFMM.

- Sparse LU preconditioning for MLFMM

- Use a sparse LU decomposition of the matrix (MLFMM/ACA) as a preconditioner. When using the parallel Solver, the factorisation type can be specified.

- Accelerated SPAI (faster, possibly more iterations)

- This option enables the use of the accelerated SPAI preconditioner. It is faster, but could require more iterations for convergence.

- Options for the Biconjugate Gradient Method

- Fletcher’s method

- Jacob’s method

- Fletcher’s method, pre-iteration using Fletcher’s method

- Fletcher’s method, pre-iteration using Jacob’s method

- Jacob’s method, pre-iteration using Fletcher’s method

- Block size (Block-Jacobi)

- The block size to be used for Block-Jacobi preconditioning. When the input field is left empty, appropriate standard values are used for the block preconditioners.

- Threshold value for ILUT

- This is the thresholding value used for the FEM in connection with the ILUT preconditioners.

- Level-of-fill

- This is used by the MLFMM during the iterative matrix solution in connection with the incomplete LU preconditioner. The recommended range for this parameter is between 0 and 12. Feko will choose the value for the best preconditioning, but if the size of the incomplete LU preconditioner is too large to fit into memory, it can be reduced by reducing the level-of-fill. It should be noted that a lower level-of-fill might result in a slower convergence or even divergence in the iterative solution. This preconditioner can only be used in a sequential solution.

- Fill-in per row

- This is used by the FEM during the iterative matrix solution in connection with the incomplete LU preconditioners with thresholding. It sets a limit on the number of entries per row that will be included in the incomplete LU-decomposition of the preconditioner matrix.
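How a threshold value and a fill-in limit can interact is sketched below for a single sparse row. The sketch follows the dual dropping strategy commonly described for ILUT in the literature, simplified to one limit per row; whether Feko applies exactly these rules is an assumption. The function and parameter names (`drop_row_entries`, `threshold`, `max_fill`) are hypothetical.

```python
import math


def drop_row_entries(row, diag_index, threshold, max_fill):
    """Apply ILUT-style dual dropping to one sparse row given as {column: value}.

    The diagonal entry is always kept and does not count towards max_fill.
    """
    row_norm = math.sqrt(sum(abs(v) ** 2 for v in row.values()))
    # Rule 1: drop off-diagonal entries that are small relative to the row norm.
    kept = {c: v for c, v in row.items()
            if c == diag_index or abs(v) >= threshold * row_norm}
    # Rule 2: of the survivors, keep only the max_fill largest off-diagonal entries.
    off_diag = sorted((c for c in kept if c != diag_index),
                      key=lambda c: abs(kept[c]), reverse=True)
    allowed = set(off_diag[:max_fill])
    if diag_index in kept:
        allowed.add(diag_index)
    return {c: kept[c] for c in sorted(allowed)}
```

A larger threshold or a smaller fill-in limit gives a sparser, cheaper preconditioner, at the cost of a poorer approximation of the full LU factors and therefore possibly slower convergence.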

- Stabilisation factor (FEM)

- This applies only to the incomplete LU preconditioners of the FEM and can be used to get better convergence for the FEM in critical cases (the value range is between 0 and 1).

- Factorisation for parallel execution

- This advanced option only applies when using the parallel Solver and allows you to select between standard full-rank factorisation and block low-rank (BLR) factorisation. For some classes of models solved with the MLFMM or FEM, block low-rank (BLR) factorisation can reduce the factorisation complexity and the memory footprint of the sparse LU-based preconditioners.

- Default

- If this option is selected, the predefined factorisation type adopted by the Solver is applied.

- Auto

- If this option is selected, the factorisation type is determined automatically based on the model.

- Use standard full-rank factorisation

- If this option is selected, standard full-rank factorisation is applied.

- Use block low-rank (BLR) factorisation

- If this option is selected, block low-rank (BLR) factorisation is applied.

- Save/read preconditioner

- For the incomplete LU preconditioners used with the FEM, the preconditioner can be computed once and written to a .pcr file. A subsequent solution can then read it from this file, which saves runtime. Since the FEM preconditioners depend only on the FEM part of the matrix, this method is useful when only the MoM part of a FEM/MoM problem has changed.